Cybercriminals have always looked for ways to work faster and hit harder. Now they've found one of the most powerful tools yet: artificial intelligence. AI isn't just being used by security teams to defend systems. Attackers are actively using it to automate tasks that once took days, completing them in minutes. The result is a new class of attacks that are faster, more convincing, and harder to stop than anything most organizations have faced before.

Key Takeaways

- AI allows hackers to launch attacks at a speed and scale that wasn't possible with manual methods alone.

- Phishing emails generated by AI are now more convincing and targeted than the generic scams employees learned to spot.

- Attackers are combining phone calls and emails in coordinated, multi-pronged campaigns to increase their success rate.

- AI tools can scan for vulnerabilities and generate working exploits automatically, reducing the need for skilled human involvement at every step.

- Training employees to recognize AI-powered threats is one of the most effective defenses any organization can deploy.

AI Gives Attackers an Unfair Speed Advantage

Traditionally, pulling off a sophisticated cyberattack required skilled people working through each stage by hand. Today, hackers are using AI to automate reconnaissance and exploit generation, which means they can identify vulnerable systems and build working attack code with far less hands-on effort.

What used to take days can now happen in hours, and in some cases minutes. Because AI can run those processes simultaneously across thousands of targets, a single attacker can operate at a scale that once required an entire criminal organization. Security teams that were already stretched are now routinely outpaced by automated systems built specifically to find and exploit weaknesses.

Phishing Emails That Are Nearly Impossible to Question

Phishing has always been one of the most reliable attack methods, and AI has made it significantly more dangerous. AI phishing scams are now crafted precisely enough that even cautious employees get fooled. AI tools can pull public information about a company, its people, and its communication style, then generate emails that sound like they came from inside the organization.

The old giveaways, like awkward phrasing and obvious grammar mistakes, are largely gone. What's left is a message that reads as routine, right up until someone clicks something they shouldn't.

The Multi-Pronged Approach: Phone Calls and Email Together

One tactic gaining serious traction is the coordinated use of phone calls and emails within the same attack. An attacker might send a convincing email first, referencing a payroll update or an urgent IT request, then follow it up with a phone call that reinforces the same message. The caller often uses a spoofed number to appear to be calling from HR, IT support, or a company executive.

AI makes this approach far more effective because voice synthesis tools can now replicate someone's speaking patterns from a short audio sample. When an employee receives both an email and a phone call saying the same thing from what appears to be the same trusted source, the likelihood of them complying without verifying goes up significantly.

This coordinated pressure is built to eliminate the mental space people need to pause and check before acting. It exploits trust from two directions at once, and that combination is exactly what makes it effective.
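One way to picture the "pause and check" step is a simple rule that flags any claimed sender who reaches out over more than one channel in a short window, so the request gets verified through a known-good contact before anyone acts. The sketch below is only an illustration under stated assumptions: the `Contact` record, its field names, and the one-hour window are hypothetical and not taken from any real security product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Contact:
    channel: str          # hypothetical values: "email" or "phone"
    claimed_sender: str   # who the message claims to come from
    received_at: datetime


def needs_verification(contacts, window=timedelta(hours=1)):
    """Return claimed senders seen on more than one channel within `window`."""
    flagged = set()
    ordered = sorted(contacts, key=lambda c: c.received_at)
    for i, first in enumerate(ordered):
        for second in ordered[i + 1:]:
            # Contacts are sorted by time, so later ones are outside the window too.
            if second.received_at - first.received_at > window:
                break
            if (second.claimed_sender == first.claimed_sender
                    and second.channel != first.channel):
                flagged.add(first.claimed_sender)
    return flagged


# Made-up example data for illustration only.
contacts = [
    Contact("email", "IT Support", datetime(2024, 1, 8, 9, 0)),
    Contact("phone", "IT Support", datetime(2024, 1, 8, 9, 20)),
    Contact("email", "HR", datetime(2024, 1, 8, 15, 0)),
]
flagged_senders = needs_verification(contacts)
```

A rule like this would not prove an attack is underway. It only marks the pattern described above as a cue to slow down and confirm the request independently.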

Autonomous Attacks That Don't Need a Human in the Loop

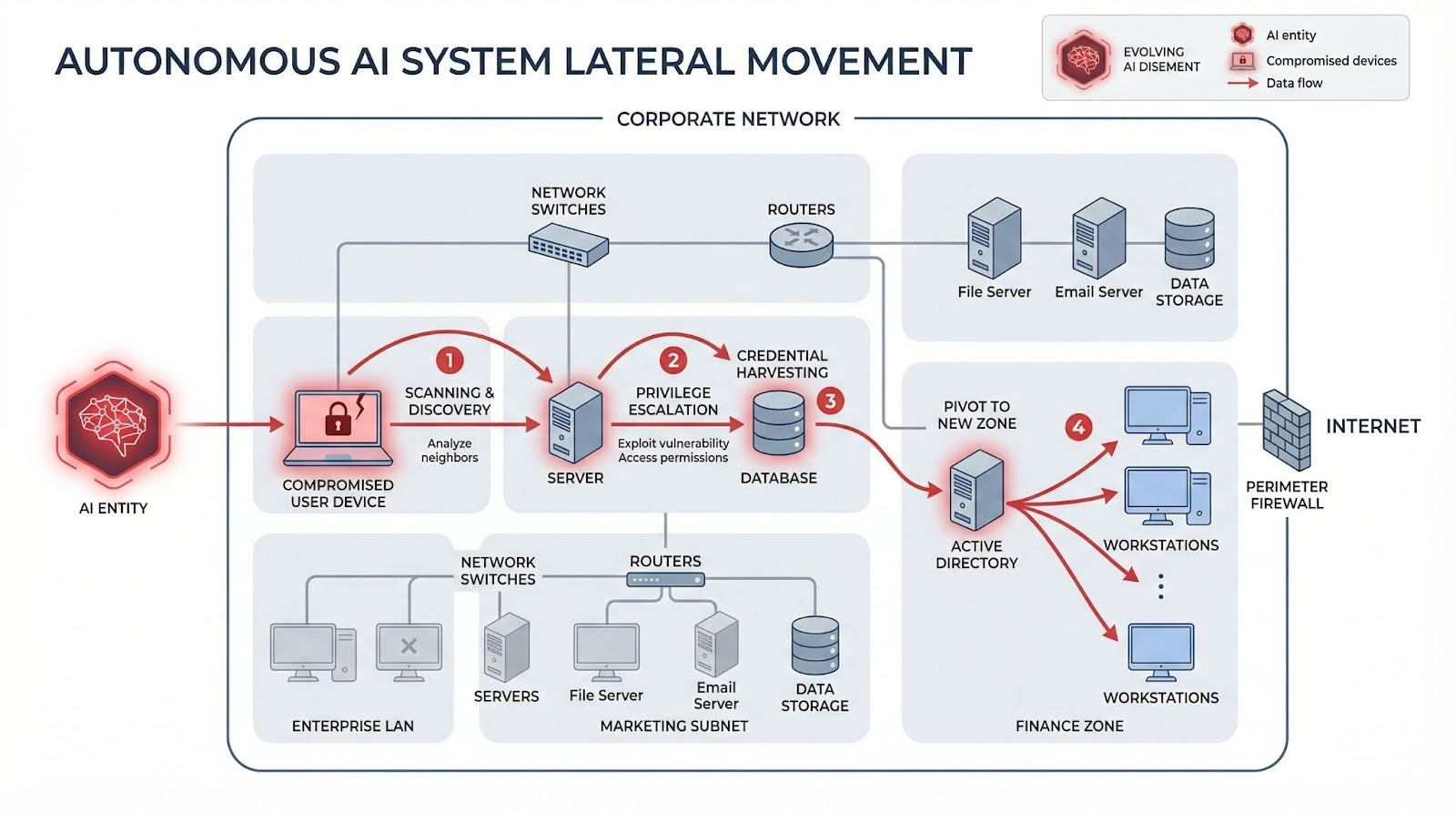

Beyond phishing, some attackers are now deploying agentic AI for autonomous attack chains, meaning an AI system can carry out a full multi-stage intrusion with minimal human direction. It can scan a network, find an entry point, move laterally through systems, locate sensitive data, and begin exfiltrating it without a person guiding every step.

This means complex attacks can run around the clock across multiple targets at once, without the attacker needing to scale their team. For organizations, this translates to threats that move faster than traditional detection tools are designed to handle.

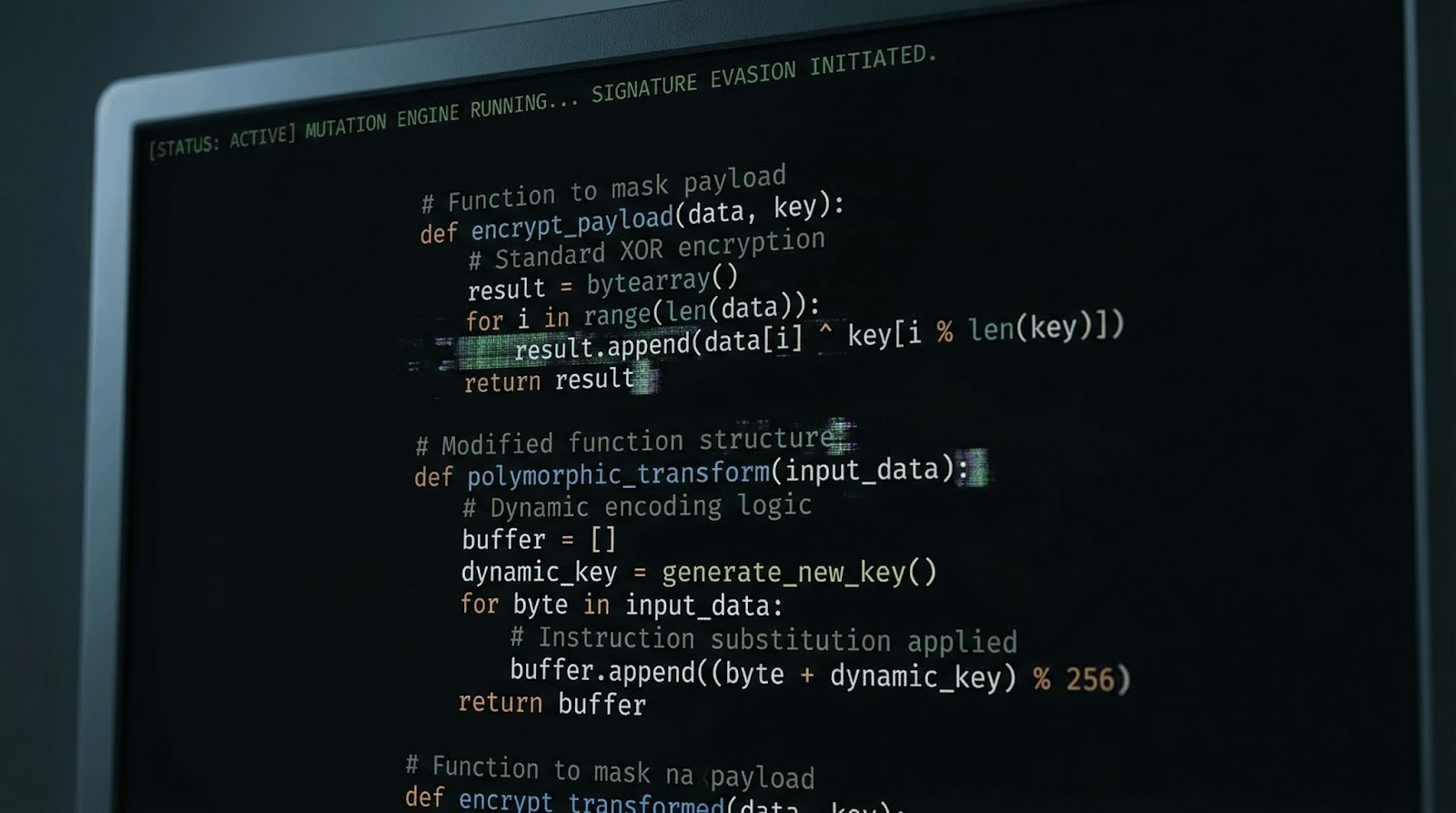

Malware That Rewrites Itself to Stay Hidden

One major advantage security teams have traditionally relied on is recognizing known attack patterns and signatures. AI is being used to neutralize exactly that. AI-powered malware that adapts in real time can modify portions of its own code to evade detection, shifting just enough to slip past signature-based defenses.
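To see why even a small code change can defeat a signature match, consider the simplest possible form of signature: an exact hash of the file. The sketch below is a simplified illustration, not how any particular security product works. The sample bytes are harmless placeholders, and the helper names `signature` and `is_flagged` are hypothetical.

```python
import hashlib


def signature(payload: bytes) -> str:
    """Return the SHA-256 hex digest, used here as a stand-in 'signature'."""
    return hashlib.sha256(payload).hexdigest()


# Hypothetical sample: harmless placeholder bytes, not real malware.
known_sample = b"example-payload-v1"
known_signatures = {signature(known_sample)}


def is_flagged(payload: bytes) -> bool:
    """Exact-match lookup, the simplest form of signature-based detection."""
    return signature(payload) in known_signatures


# A variant that differs from the known sample by a single trailing byte.
mutated_sample = known_sample + b"\x00"

original_flagged = is_flagged(known_sample)   # the digest matches the stored entry
mutated_flagged = is_flagged(mutated_sample)  # the digest no longer matches
```

Real signature engines typically match on more than whole-file hashes, but the same weakness applies in principle: a defense that depends on recognizing something already seen can be sidestepped by changing what it looks like.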

Some variants are also built to read their environment. If the malware detects a sandbox or analysis tool, it goes dormant. Once it confirms it's on a live network, it resumes. That adaptive behavior makes these threats harder to catch, harder to analyze, and harder to stop.

Why Employee Training Matters More Than Ever

Technology defenses are essential, but they can't do the whole job. AI-powered attacks are designed to manipulate people, not just exploit software. That's why fully managed security awareness training is one of the most direct investments an organization can make. When employees can recognize a convincing phishing email, understand how multi-channel attacks work, and feel equipped to verify before clicking, attackers lose their most reliable entry point.

Understanding the rise of AI threats to cybersecurity is increasingly a shared responsibility across teams, not just IT. Organizations that build security awareness into everyday workflows create a culture where threats get caught earlier and stopped before they cause real damage. Consistent training that reflects the current threat environment is far more effective than an annual session that's outdated before employees leave the room.

Your workforce is both your biggest vulnerability and your best line of defense. Strengthen your team's defenses against AI-powered attacks and start building a security culture that keeps pace with today's threats.

The Threat Is Real. The Training Can Match It.

As AI tools become cheaper and easier to access, the attacks built on them will only get more sophisticated. The organizations that hold up best won't necessarily have the most expensive security stack. They'll be the ones whose people know what to look for, know what to do when something feels off, and have practiced those instincts often enough to act on them. That's a training problem, and it's entirely solvable.
